Ideally, when designing an educational program, program-related decisions should be based on evidence of effectiveness. Evidence can be found in various places (e.g., websites, published effectiveness research, conference presentations). However, there is no guarantee that the information found in those sources is credible. In addition, looking for evidence in individual sources can be daunting, especially for those not trained in such a task. Therefore, we should search for credible evidence in an efficient manner. Here are some essential questions to consider in this process:

- Where can we find high-quality information regarding effective programming?

- How can we determine what scholarship provides credible evidence of effectiveness versus (mis)information that should be ignored?

- How should we summarize the existing credible evidence to inform our educational programming decisions?

Credible Evidence: What is it? Why is it important?

Credible evidence for program effectiveness claims is evidence that is transparent and has been rigorously examined through robust, unbiased experimental design, analysis, reporting of results, and interpretation. Experimental design is the most important characteristic when determining the kind of inferences that can be made regarding program effectiveness.

For example, let’s imagine that we would like to know whether students experiencing a program (e.g., Alternative Spring Break) are more likely to achieve outcomes of interest than students not experiencing the program. At a minimum, answering such a question requires two groups of students: one group experiencing the program and one group not experiencing the program. Particular data collection designs (i.e., randomized controlled trials, quasi-experimental designs, which are described below) allow us to attribute changes in outcomes of interest to the intended program. If an experimental design is not used, causal claims regarding a program are limited or cannot be made. Hence, evidence from studies using experimental designs is the most credible for program effectiveness inferences.
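The two-group comparison described above can be sketched in a few lines of Python. This is only an illustrative sketch, not a prescribed analysis: the function names, the student IDs, and the outcome values are all hypothetical, and a real evaluation would also need an appropriate statistical test and a much larger sample.

```python
import random


def randomly_assign(student_ids, seed=0):
    """Shuffle students and split them into a program group and a comparison group.

    Random assignment is the defining feature of a randomized controlled trial.
    """
    rng = random.Random(seed)
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def outcome_rate(group, achieved):
    """Proportion of students in `group` who achieved the outcome of interest."""
    return sum(1 for student in group if achieved[student]) / len(group)


def difference_in_rates(program_group, comparison_group, achieved):
    """Outcome rate of the program group minus that of the comparison group."""
    return outcome_rate(program_group, achieved) - outcome_rate(comparison_group, achieved)


# Hypothetical data: True means the student achieved the outcome of interest.
achieved = {1: True, 2: True, 3: True, 4: False,
            5: True, 6: False, 7: False, 8: False}
program_group = [1, 2, 3, 4]      # experienced the program
comparison_group = [5, 6, 7, 8]   # did not experience the program

print(difference_in_rates(program_group, comparison_group, achieved))  # 0.5
```

In this made-up example, 75% of the program group and 25% of the comparison group achieved the outcome. Only when students were assigned to groups at random (as in `randomly_assign`) can such a difference be attributed to the program with confidence.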

Pyramid of Evidence for Program Effectiveness Inferences

The pyramid of evidence for program effectiveness inferences is a valuable resource for educators to understand the relation between the credibility of claims and research design. The pyramid of evidence is a schema that ranks evidence based on credibility. As shown in the pyramid below, systematic reviews are at the top, meaning evidence from such reviews provides the most credible claims. In contrast, claims based on information from the bottom part of the pyramid (i.e., expert opinion, background information) are the least credible.

“Best Available” Research Evidence: What is it?

Although we should aim to find highly credible evidence (i.e., well-conducted systematic reviews), it may not exist. Therefore, we need to find the “best-available” evidence. “Best-available” evidence is the most credible evidence that currently exists. To gather the “best-available” evidence, we should ask and answer the following questions:

- How much research has been conducted to evaluate the effectiveness of the program?

- What effects does the program have on particular outcomes?

- How well does the design of the various studies (e.g., randomized controlled trials, quasi-experimental designs) support the validity of findings? That is, can the outcomes truly be attributed to the program?

- What implementation fidelity resources are available?
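The idea of settling for the most credible evidence that currently exists can be illustrated with a short Python sketch. It assumes a simplified ranking of study designs: the source places systematic reviews at the top and expert opinion at the bottom, while the ordering of the middle tiers, the `DESIGN_RANK` table, and the study records are assumptions made here purely for illustration.

```python
# Assumed ordering, most credible first (0 = most credible).
# Only the top and bottom tiers come from the text; the middle tiers are illustrative.
DESIGN_RANK = {
    "systematic review": 0,
    "randomized controlled trial": 1,
    "quasi-experimental design": 2,
    "non-experimental study": 3,
    "expert opinion": 4,
}


def best_available(studies):
    """Return the studies whose design is the most credible among those found."""
    if not studies:
        return []
    top_rank = min(DESIGN_RANK[study["design"]] for study in studies)
    return [study for study in studies if DESIGN_RANK[study["design"]] == top_rank]


# Hypothetical search results for one program.
found = [
    {"title": "Study A", "design": "expert opinion"},
    {"title": "Study B", "design": "quasi-experimental design"},
    {"title": "Study C", "design": "non-experimental study"},
]

for study in best_available(found):
    print(study["title"], "-", study["design"])
```

No systematic review or randomized controlled trial exists in this hypothetical search, so the quasi-experimental study is the best-available evidence. If a systematic review were published later, rerunning the same function would return that review instead.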

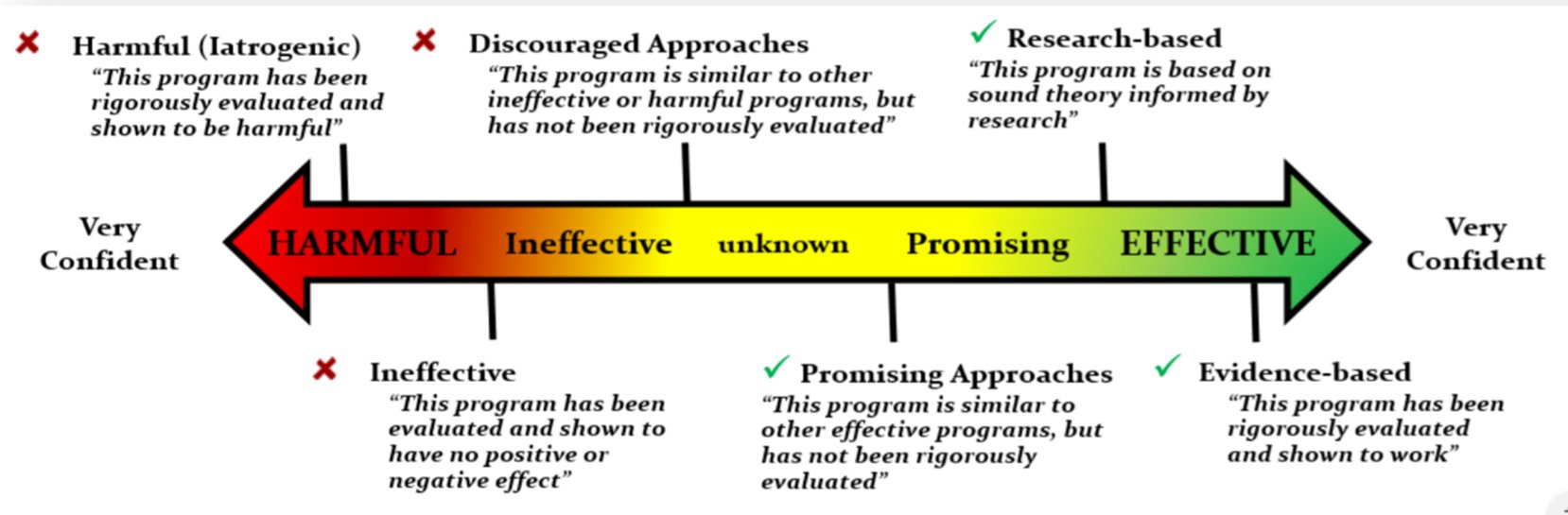

Because building such evidence takes a lot of time and resources, the best-available evidence at a point in time may be weak or not credible. That being said, the concept of “best-available” evidence acknowledges that evidence is continuously being gathered. Hence, weak or non-credible evidence at a point in time (e.g., “unknown” or “promising”) may be replaced with more credible evidence as time goes on (e.g., “effective”). Refer to the “Continuum of Evidence of Effectiveness” below.

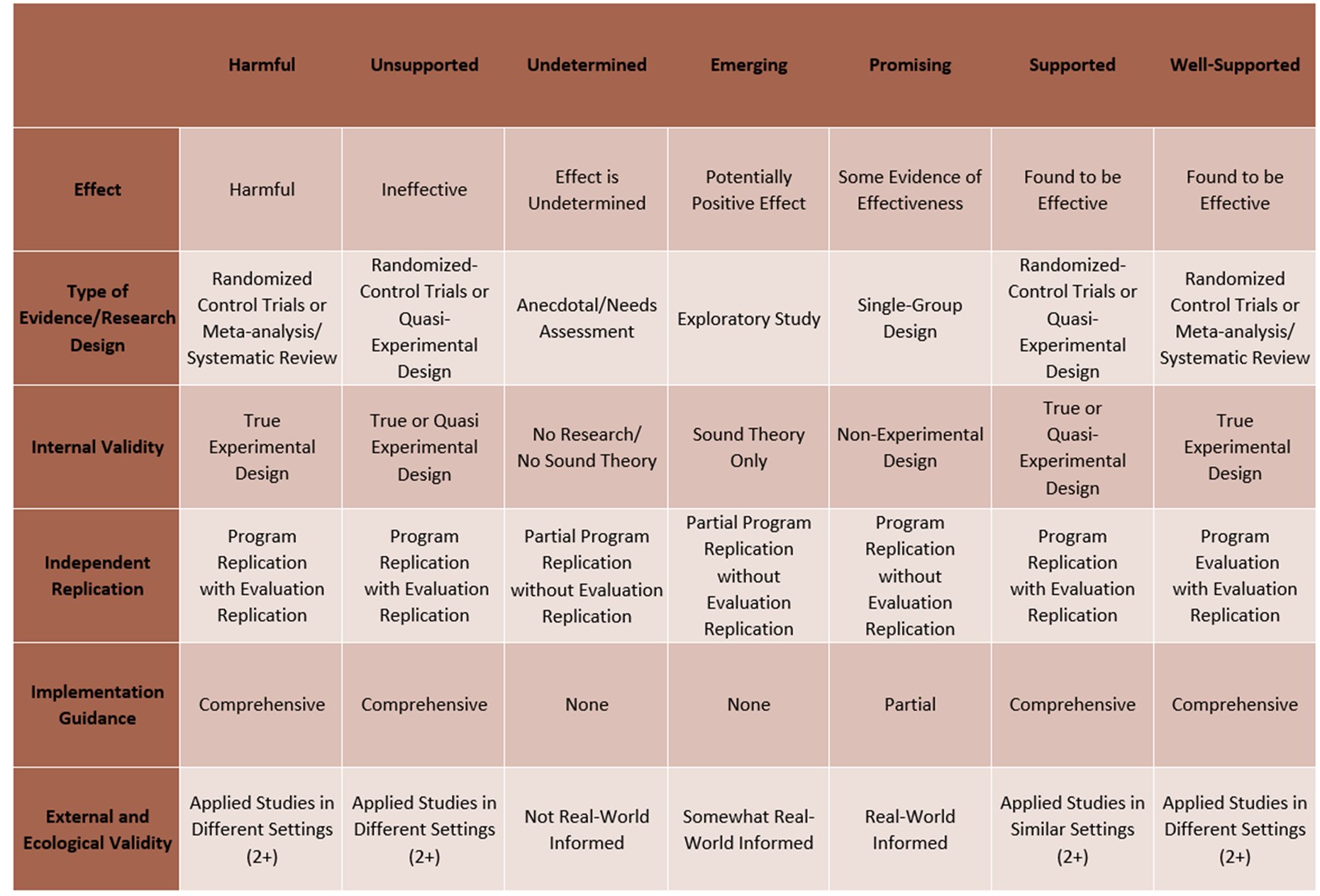

Credible Evidence Characteristics


View legend for the terms used in the table

“Best-Available Evidence”: Where to find it?

As noted previously, best-available evidence can be searched for in individual sources (e.g., individual published studies). However, such a search may not be the most efficient. Before looking through a mountain of individual sources, it is helpful to examine collections of evidence (preferably ones that are high-quality and professionally reviewed).

Systematic Review Repositories

Systematic reviews found in journals are not always of the highest quality; some do not meet rigorous scientific criteria (for more information on the scientific criteria that should be used in systematic reviews, visit Preferred Reporting Items for Systematic Reviews and Meta-Analyses: The PRISMA Statement). In contrast, systematic reviews found in systematic review repositories (i.e., online databases containing collections of systematic reviews) have been rigorously evaluated, and these repositories include only high-quality systematic reviews (see Applying What Works Clearinghouse Standards as an example of the rigorous review process used in systematic review repositories).

Three well-known organizations conduct high-quality systematic reviews relevant to higher education:

- Campbell Collaboration (education, crime, welfare)

- The Cochrane Library (health)

- What Works Clearinghouse (WWC) of the U.S. Department of Education

Other useful systematic review repositories can be found in Repositories of Effectiveness Studies, which is included in Finney & Buchanan (2021). This article and resource provide information on systematic reviews and systematic review repositories to support evidence-informed programming in higher education.

“Wise Interventions” Database

Not all collections of studies meet the rigorous set of criteria used in systematic reviews. Yet, these collections can still provide useful information. We recommend a resource curated by Greg Walton and colleagues. “Wise Interventions” are short yet powerful interventions that affect behavior, self-control, belonging, achievement, and other outcomes related to higher education. They target the underlying psychological processes that influence the outcome(s) of interest. Although this resource is not a collection of randomized controlled trials (as in systematic reviews), it provides valuable information on how to effectively influence intended outcomes.

Individual Studies

We may not find existing systematic reviews evaluating programming aligned with intended outcomes. If that happens, we need to examine individual empirical studies of programs and combine findings to inform effectiveness statements. Such studies can be individual experimental studies (i.e., randomized controlled trials, quasi-experimental designs) or non-experimental studies (i.e., observational studies, surveys, interviews, focus groups, case studies). These studies often appear in peer-reviewed journals, but publication alone does not indicate that a study or its results can be trusted. Therefore, we need to evaluate the quality of the results before using them in the development or evaluation of our program (which further underscores the utility of systematic reviews, where this combination of high-quality research is done for us). We recommend reaching out to your librarian for help conducting such searches.

Additional Resources

Video: Systematic Review Repositories In Brief

Clearinghouse (Consolidates Several Databases of Evidence-Informed Programs): Pew Charitable Trusts Results First Clearinghouse Database

Article (Guide on Evidence-Informed Programming + Resources): Finney & Buchanan (2021)

Article (Evaluating the Credibility of Evidence): Horst, Finney, Prendergast, Pope & Crewe

Website (Library Resource): Systematic Reviews