The next two steps of the Assessment Cycle are critical. We must use a data collection design that supports the inferences we intend to make as specified by our student learning and development outcomes (SLOs). Then we must appropriately analyze the results to draw accurate conclusions. During these steps of the process, you will need to consider questions such as:

- Who or what do you want to make inferences about?

- Who will be assessed?

- What data collection design should be used based on your SLOs?

- When, where, and how will you collect the data?

- Are there threats to the validity of the inferences you want to make?

Match Between SLOs and Data Collection Design

After writing specific, measurable SLOs, creating programming intended to influence student learning and development with respect to those SLOs, and selecting or designing instruments, we need a data collection plan that supports the inferences we intend to make, as articulated in the SLOs. Thus, your SLOs should dictate the type of data collection design you implement.

Data Collection Designs

Longitudinal

With longitudinal designs, data are collected from the same group(s) of students at more than one time point. Although longitudinal data may be more difficult and time-consuming to collect, this design allows for inferences about individual or average change over a period of time, such as over the course of a program. For example, you may want to see how students develop or change over the course of a semester.

- The following SLO suggests a longitudinal design is needed: After completing Transfer Student Orientation, transfer students will be able to list 4 more academic resources on campus than before Transfer Student Orientation.
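
As a rough illustration of how data from a longitudinal design might be summarized for the SLO above, the sketch below computes each student's gain from pretest to posttest. The student IDs and scores are hypothetical, and the choice to report both the mean gain and the share of students gaining at least 4 is an assumption about how one might read the SLO, not a prescribed analysis.

```python
# Hypothetical pretest and posttest counts of academic resources listed by
# the same five transfer students (illustrative values, not real data).
pre = {"s01": 1, "s02": 3, "s03": 0, "s04": 2, "s05": 4}
post = {"s01": 5, "s02": 8, "s03": 4, "s04": 5, "s05": 9}

REQUIRED_GAIN = 4  # from the SLO: "4 more academic resources"

# Only students measured at both time points can contribute a change score.
matched = sorted(pre.keys() & post.keys())
gains = {sid: post[sid] - pre[sid] for sid in matched}

mean_gain = sum(gains.values()) / len(gains)
n_met = sum(1 for g in gains.values() if g >= REQUIRED_GAIN)
pct_met = 100 * n_met / len(gains)

print(f"Mean gain: {mean_gain:.1f}")
print(f"Students gaining at least {REQUIRED_GAIN}: {n_met} of {len(gains)} ({pct_met:.0f}%)")
```

Matching students across time points is the step that makes this longitudinal: a student missing either score cannot show change.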

Cross-Sectional

Cross-sectional designs collect data at a single time point and are typically used to make comparisons between multiple groups. For example, you may want to examine average differences in skills between a group participating in a program and a group not participating in a program (i.e., a control group). Or, you may want to evaluate the relation between first-year students’ participation in co-curricular activities and their GPA.

- The following SLO suggests a cross-sectional design is needed: As a result of completing Transfer Student Orientation, incoming transfer students will be able to list 4 more academic resources on campus than students who did not complete Transfer Student Orientation.
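
A minimal sketch of the group comparison implied by the SLO above, assuming hypothetical scores collected once from students who did and did not complete orientation. The group names and values are invented for illustration, and comparing group means is only one plausible reading of "4 more academic resources."

```python
from statistics import mean

# Hypothetical counts of academic resources listed at a single time point
# (illustrative values, not real data).
attended = [6, 7, 5, 8, 6, 7]       # completed Transfer Student Orientation
not_attended = [2, 3, 1, 4, 2, 3]   # did not complete it

REQUIRED_DIFFERENCE = 4  # from the SLO: "4 more ... than students who did not"

mean_attended = mean(attended)
mean_not_attended = mean(not_attended)
mean_difference = mean_attended - mean_not_attended
meets_slo = mean_difference >= REQUIRED_DIFFERENCE

print(f"Attended mean: {mean_attended:.1f}")
print(f"Did not attend mean: {mean_not_attended:.1f}")
print(f"Difference: {mean_difference:.1f} (meets SLO: {meets_slo})")
```

A difference between groups describes the groups as observed; on its own it does not show that the program caused the difference.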

Single Group/Single Time Point

Data are collected from a single group of students at a single time point. These designs are often appropriate when one wants to make interpretations relative to a criterion. For example, you may want to determine how many, or what percentage of, students meet a specified cutoff.

- The following SLO suggests a single group/single time point design is needed: As a result of completing Transfer Student Orientation, incoming transfer students will be able to list 4 academic resources on campus.
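
For a single group/single time point design, the analysis can be as simple as counting how many students meet the criterion. The sketch below assumes hypothetical posttest scores and takes the cutoff of 4 from the SLO above.

```python
# Hypothetical counts of academic resources listed after orientation
# (illustrative values, not real data).
scores = [4, 6, 3, 5, 4, 2, 7, 4]

CUTOFF = 4  # from the SLO: "list 4 academic resources"

n_met = sum(1 for s in scores if s >= CUTOFF)
pct_met = 100 * n_met / len(scores)

print(f"{n_met} of {len(scores)} students ({pct_met:.0f}%) met the cutoff of {CUTOFF}")
```

Because there is no pretest or comparison group, this result describes competency at one moment and says nothing about change or program impact.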

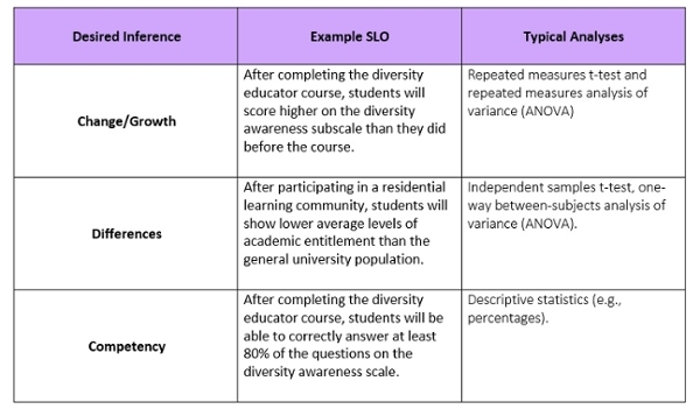

Match Between Desired Inferences, SLOs, and Analyses

Not only do your SLOs dictate the data collection design, but they also define the type of inferences you are able to make. For example, do you want to make inferences about group differences, student change/growth, relations between variables, or student competency? These types of inferences should be articulated in the SLOs. In addition, the SLOs and desired inferences inform the type of analyses used to evaluate assessment results.

Threats to Validity

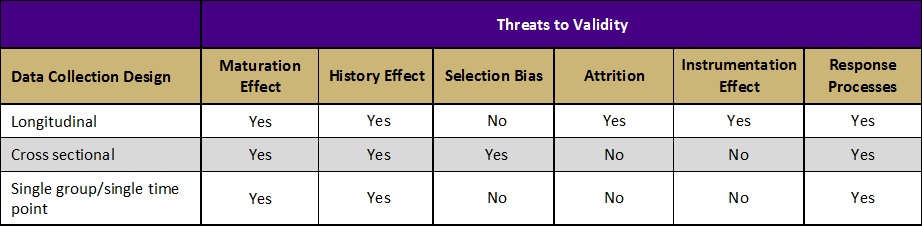

Validity refers to the degree to which evidence supports the intended interpretation of assessment results. Several factors can threaten the validity of the inferences we make from assessment results, and each data collection design protects against some of these threats while remaining susceptible to others. Some of the most common threats to validity include:

Maturation Effect: The observed effect is due to students’ normal developmental processes or changes over time, not the program.

- Example: Program A (implemented during the first semester of college) claims to have increased students’ sense of independence. However, studies show students naturally gain more independence during their first semester of college even without an intervention.

Selection Bias: The observed difference between two groups at posttest is not due to the program, but to preexisting differences between the groups.

- Example: Facilitators of Program C compare students who participated in their service-learning program to students who did not participate and are pleased to find that their students are higher in civic engagement. Clear evidence that the program works! Upon further investigation, however, they discover that students high in civic engagement were more likely to participate in their program in the first place. Thus, the difference between the groups was due to self-selection into the program, not the program’s effectiveness.
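
One way to probe for selection bias, sketched below under stated assumptions, is to check whether the groups already differed before the program. The data are hypothetical (pre, post) civic engagement scores on an assumed 1 to 5 scale, and the `summarize` helper is introduced here only for illustration.

```python
from statistics import mean

# Hypothetical (pre, post) civic engagement scores on an assumed 1-5 scale
# (illustrative values, not real data).
participants = [(4.2, 4.4), (4.5, 4.6), (4.0, 4.3), (4.4, 4.5)]
non_participants = [(3.0, 3.2), (3.3, 3.4), (2.9, 3.2), (3.2, 3.3)]


def summarize(group):
    """Return (pretest mean, posttest mean, mean gain) for a list of (pre, post) pairs."""
    pre_mean = mean(pre for pre, _ in group)
    post_mean = mean(post for _, post in group)
    return pre_mean, post_mean, post_mean - pre_mean


p_pre, p_post, p_gain = summarize(participants)
n_pre, n_post, n_gain = summarize(non_participants)

print(f"Posttest gap between groups: {p_post - n_post:.3f}")
print(f"Pretest gap between groups:  {p_pre - n_pre:.3f}")
print(f"Gain, participants: {p_gain:.3f}; non-participants: {n_gain:.3f}")
```

In this invented data set the posttest gap is already present at pretest and both groups gain the same amount, which is the pattern self-selection would produce. Checking this requires a pretest, which a posttest-only comparison does not provide.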

Response Processes: Results cannot be trusted to reflect students’ true ability because they are affected by factors such as socially desirable responding and low motivation.

- Example: After completing a 6-hour alcohol prevention workshop, students are fatigued and ready to leave. Unsurprisingly, when asked to complete a 100-item posttest (the only thing separating them from freedom), they speed through the test, responding randomly to the questions. Subsequent posttest results indicate students gained nothing from the workshop. Should these results be trusted?
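
If completion times are recorded, one possible screen for the kind of rapid responding described above is to flag students who spent implausibly little time per item. This is only a sketch: the completion times are hypothetical, and the 3-seconds-per-item threshold is an assumption that would need to be justified for a real instrument.

```python
N_ITEMS = 100             # length of the posttest in the example above
MIN_SECONDS_PER_ITEM = 3  # assumed threshold for a plausible effortful response

# Hypothetical total completion times in seconds (illustrative, not real data).
completion_seconds = {"s01": 1500, "s02": 140, "s03": 1260, "s04": 95, "s05": 900}

# Flag students whose average time per item falls below the threshold.
flagged = sorted(
    sid
    for sid, seconds in completion_seconds.items()
    if seconds / N_ITEMS < MIN_SECONDS_PER_ITEM
)

print(f"Flagged for review: {flagged}")
```

Flagged responses are candidates for review rather than automatic exclusion; the decision about how to treat them belongs to the people interpreting the results.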

Learn more about the threats to validity outlined in the table above

Integrating AI (Collecting Data)

While AI can’t physically collect data, it can help you plan your data collection strategy and anticipate potential barriers.

As an educator, how and when would you collect outcomes data to best understand student learning and development?

ChatGPT and Copilot Chat can:

- Help you think through the logistics and timing of your data collection plan.

- Brainstorm data collection formats based on your student population and program context.

- Draft language for online surveys, paper assessments, or in-person interview protocols.

How does the data collection design align with claims you hope to make about student outcomes and program effectiveness?

ChatGPT and Copilot Chat can help you reflect on your design choice and its implications. For example, you can ask:

- Does a posttest data collection design allow me to make claims that align with my outcomes? (paste outcomes)

- Does my current data collection design allow me to attribute change in outcomes to my programming?

Note: While AI can help you plan and articulate your approach, all final decisions about design and timing should be reviewed by experts in your department or in assessment.