Points of Contact: Tom DeVore (540.568.6672), Isaiah Sumner (540.568.6670)

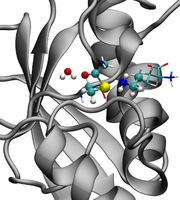

Computation has become an indispensable tool for the modern chemist. Simulations of chemical and biochemical processes routinely provide atomic-level detail that is difficult or impossible to obtain with traditional experimental techniques. For this reason, the JMU Department of Chemistry and Biochemistry operates a high-performance computing cluster dedicated to computational chemistry: Cagliostro.

Cagliostro is a hybrid GPU/CPU cluster from Exxact. It is composed of five compute nodes and one head node. Each compute node has two 2.4 GHz six-core Intel Xeon CPUs, four NVIDIA GeForce GTX 980 GPUs, and 32 GB of memory. The head node also has two 2.4 GHz six-core Intel Xeon CPUs and 32 GB of memory. In total, Cagliostro has 10 TB of shared disk space, and its nodes are linked by a 10 GbE interconnect for parallel jobs.
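As a quick illustration of what the per-node figures add up to, the short Python sketch below tallies the resources across the five compute nodes. The class and function names are hypothetical and are not part of any software installed on the cluster. The head node is left out of the tally, on the assumption that it is not used for running jobs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """Per-node hardware figures taken from the description above."""
    cpus: int
    cores_per_cpu: int
    gpus: int
    memory_gb: int

    @property
    def cores(self) -> int:
        # Total CPU cores on one node
        return self.cpus * self.cores_per_cpu


def aggregate(node: Node, count: int) -> dict:
    """Sum the resources of `count` identical nodes."""
    return {
        "cpu_cores": node.cores * count,
        "gpus": node.gpus * count,
        "memory_gb": node.memory_gb * count,
    }


# Five compute nodes, each with two six-core CPUs, four GPUs and 32 GB of memory.
# Assumption: the head node is excluded from the totals.
COMPUTE_NODE = Node(cpus=2, cores_per_cpu=6, gpus=4, memory_gb=32)
totals = aggregate(COMPUTE_NODE, count=5)

print(totals)  # {'cpu_cores': 60, 'gpus': 20, 'memory_gb': 160}
```

Under these assumptions, the compute nodes together offer 60 CPU cores, 20 GPUs, and 160 GB of memory.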

The College of Science and Mathematics also built a computing cluster in 2023, which is located in the Frye Building. It is currently being beta tested.

Software running on these clusters includes Gaussian09, AmberMD, CP2K, CFour, GaussView5, and VMD, enabling electronic structure calculations, molecular dynamics, hybrid quantum mechanics/molecular mechanics (QM/MM) calculations, and molecular visualization, manipulation, and analysis.